the join is operating on the shard keys). In addition, our query optimizer can often accelerate joins and group bys by pushing down the work into each partition (i.e. Because we use a fixed set of partitions, every node in our distributed architecture can easily determine where a given row lives (assuming it's not randomly sharded) without coordination.

We allow the schema to specify how table data is mapped to each of those partitions via a specified shard key, or the user can decide to randomly shard the data. It achieves that by splitting up the data into a fixed set of partitions. SingleStore is unlike many relational databases in that it is scale out. Jump to the end of this post if you want to learn more.

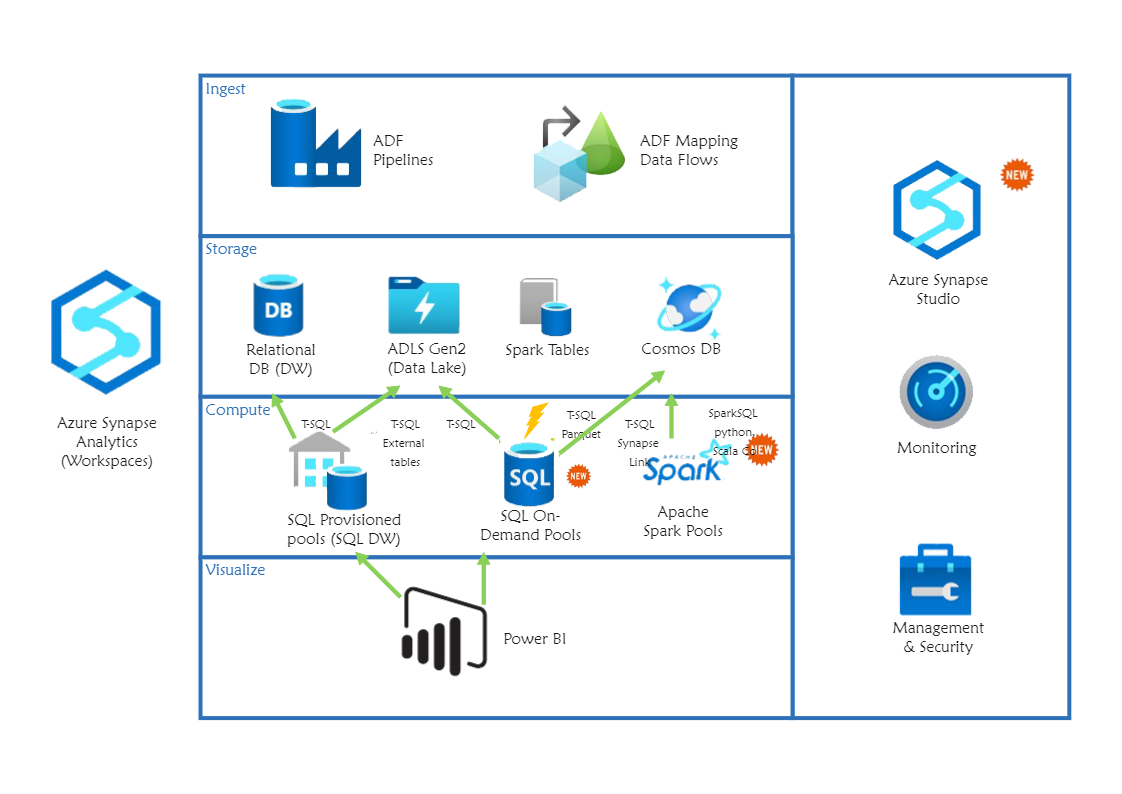

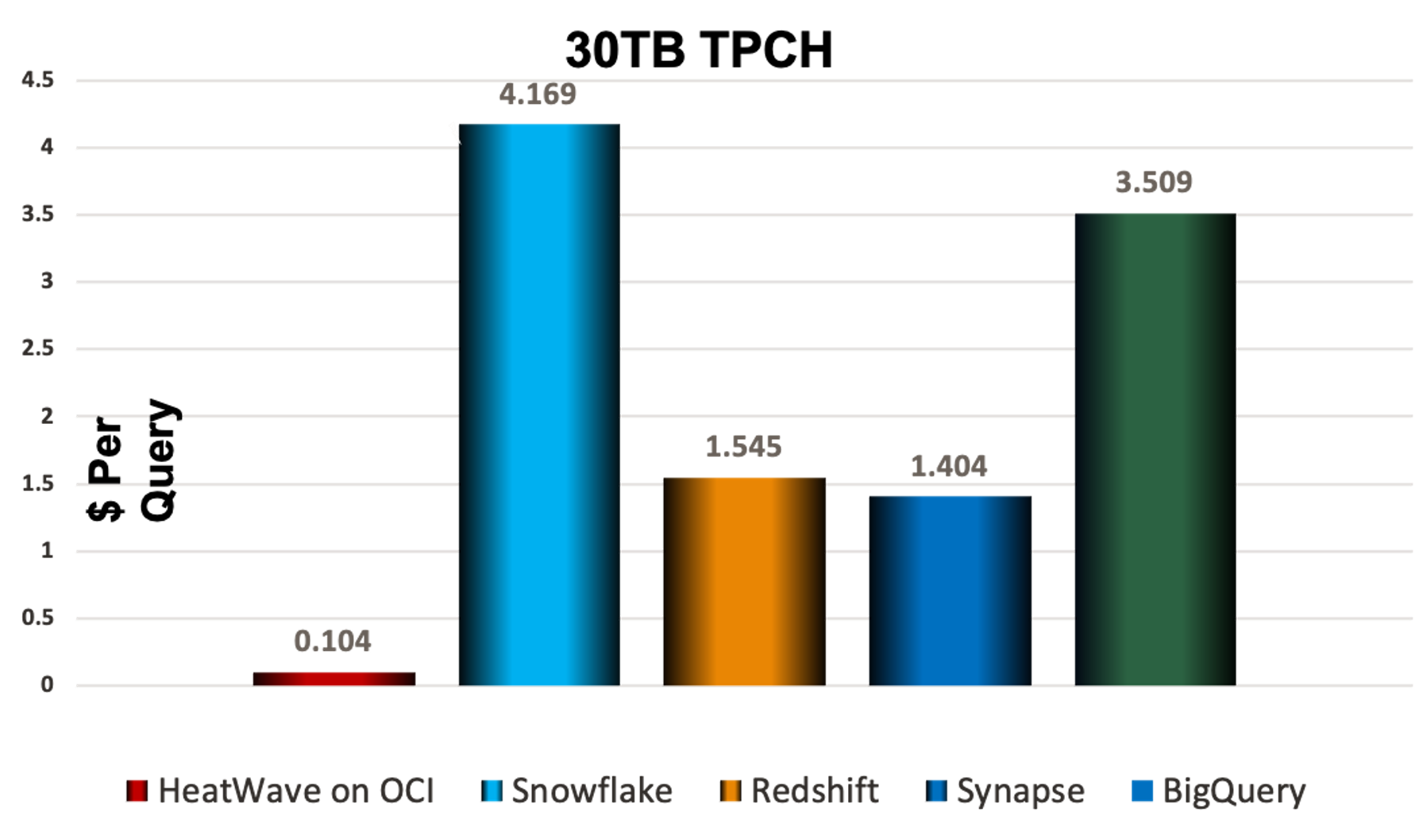

The rest of this post is specifically highlighting features which help us handle Big Data alongside a large volume of small/rapid data updates/inserts - but that's not to say that this is all we can do. We focus on providing the ideal solution for what we call " data-intensive apps". While there are certainly tradeoffs in every approach, I think you may be interested in how we handle this particular workload.įor anyone who is unaware, SingleStore is a scale-out multi-model database suited for both transactional and analytical workloads. In your comment you state "for example no database offers SQL on Big Data with large volume small/rapid data updates/inserts, because it's fundamentally impossible with current computer hardware". The team is already working on resolution. Both issues are noted and I have escalated your comments internally. U/MaxGanzII it's nice to see people bring this level of knowledge to a discussion! I am an engineer at SingleStore, and would like to clarify our architecture a bit and provide some followup resources to learn more.īut first, thank you for the candid feedback. My question is this! Has the world moved on and folks should not be querying files in data lakes? Is the native storage cloud data warehouses not the main thing anymore? As I mentioned, I don't have much data and I simply don't see an alternative to BigQuery when I look at costs. Azure Synapse Analytics has a dedicated SQL pool that gives native tables, but there is no free tier there either. Looking at AWS Redshift, there is no free tier so that is immediate more costly. I am noticing that AWS with Athena and Azure with Synapse Analytics are really promoting querying over data lakes instead of using native tables. More recently I've been looking at Azure and AWS as I seek to learn what the alternatives would be should a person similar to myself prefer a different cloud provider.

My BigQuery instance is ~5 GB of active storage and I stay within the free 1 TB query tier. Important note, I easily stay within BigQuery's free tier. It's been fantastic and has allowed me to build great data products in a free BI tool.

I then use Dataform (dbt competitor) to transform the data to create the reporting tables I need for my Google Data Studio reports. First time poster and big fan of this subreddit.įor the last 5 years I've been running lift and shift ETL to move data into BigQuery from my OLTP store (ODS using Google Cloud SQL PostgreSQL) or running batch ETL jobs that pull from other sources (Rest APIs) and import straight into BigQuery.
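To make the fixed-partition, shard-key idea described for SingleStore concrete, here is a minimal Python sketch. It is not SingleStore's implementation. The partition count, the SHA-256-based hash, and the names `NUM_PARTITIONS`, `partition_for`, `shard`, `local_join`, and `pushed_down_join` are all assumptions made up for illustration. It only shows two things: any node can compute a row's partition from its shard key alone, and a join on the shard key can run partition by partition because matching rows are already co-located.

```python
import hashlib
from collections import defaultdict

NUM_PARTITIONS = 8  # hypothetical fixed partition count (an assumption)


def partition_for(shard_key) -> int:
    """Map a shard-key value to a partition with a stable hash.

    Every node can run this locally, so no coordination is needed
    to find where a row lives. The hash choice is illustrative only.
    """
    digest = hashlib.sha256(repr(shard_key).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % NUM_PARTITIONS


def shard(rows, key):
    """Group rows (dicts) into partitions by hashing row[key]."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[partition_for(row[key])].append(row)
    return partitions


def local_join(left_rows, right_rows, key):
    """Plain hash join over two row lists that sit on one partition."""
    index = defaultdict(list)
    for rhs in right_rows:
        index[rhs[key]].append(rhs)
    return [{**lhs, **rhs} for lhs in left_rows for rhs in index[lhs[key]]]


def pushed_down_join(left_parts, right_parts, key):
    """Join partition by partition; no rows move between partitions.

    This is only valid because both tables are sharded on the join key,
    so equal keys always hash to the same partition.
    """
    out = []
    for p in range(NUM_PARTITIONS):
        out.extend(local_join(left_parts.get(p, []), right_parts.get(p, []), key))
    return out


# Toy data: both tables are sharded on user_id.
users = [{"user_id": i, "name": f"user{i}"} for i in range(20)]
orders = [{"user_id": i % 20, "order_id": i} for i in range(50)]

users_by_part = shard(users, "user_id")
orders_by_part = shard(orders, "user_id")
joined = pushed_down_join(users_by_part, orders_by_part, "user_id")
```

If the two tables were sharded on different keys (or randomly), the per-partition join above would miss matches, and rows would first have to be moved between partitions. That is the tradeoff a shard-key choice is making.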
